7 Agentic AI Attacks Nobody's Talking About (Yet)

Fragmented virus payloads, Denial of Wallet compute exhaustion, agent supply chain attacks, cross-agent privilege escalation, 22-second breaches, self-modifying agentic malware, and the consent revocation race. The threats that will define 2026.

The threats that will define 2026 — and why traditional security can’t stop them.

We’ve spent the last year watching agents get smarter. We should have been watching the attacks get smarter too.

The threat landscape for agentic AI has shifted from “prompt injection” (tricking a model) to exploiting the infrastructure around it: the memory, the compute, the delegation chains, the billing, the tool ecosystem. These aren’t hypothetical. Some have already happened. Others are inevitable before the year is out.

Here are seven predictions for agentic AI attacks in 2026 — the ones that aren’t in your threat model yet.

1. The Fragmented Virus: Payloads That Assemble Themselves

Prediction: By Q4 2026, we will see the first documented case of a fragmented malware payload distributed across an agent’s long-term memory, reassembled and executed days after initial injection.

Traditional malware detection looks for complete payloads. A virus signature, a known exploit pattern, a suspicious binary. But agents have something traditional software doesn’t: persistent memory across sessions.

The attack works like this:

- Fragment A arrives in a support ticket the agent processes on Monday. It looks like a benign code snippet.

- Fragment B arrives in a Slack message on Wednesday. It looks like a configuration template.

- Fragment C arrives in a GitHub comment on Friday. It looks like a documentation example.

Each fragment passes every scanner. Together, they form a complete exploit.

When the agent’s reasoning chain touches all three fragments in the same context window — triggered by a routine task that happens to reference all three sources — the payload assembles itself. Not because someone triggered it. Because the agent’s own associative reasoning connected the dots.

This isn’t speculation. Palo Alto Networks documented 1,184 malicious skills on the OpenClaw marketplace. Christian Schneider’s research on persistent memory poisoning showed how planted instructions survive across sessions and execute when triggered. OWASP added ASI06 (Memory & Context Poisoning) to its Top 10 for Agentic Applications 2026.

The Gemini memory attack already demonstrated delayed tool invocation triggered by common words like “yes” or “sure” — words that appear in virtually every conversation. MINJA research shows over 95% injection success rates against production agents.

What’s missing: No existing security product scans for payload fragments that are individually benign but collectively dangerous. Traditional antivirus signatures, static analysis, and even prompt injection filters all evaluate inputs in isolation. We need cross-session, cross-source payload reassembly detection — and nobody is building it yet.

2. Denial of Wallet: The $400M Cloud Leak

Prediction: “Denial of Wallet” will overtake traditional DDoS as the primary availability attack against agentic AI deployments by late 2026.

A DDoS attack tries to crash your server. A Denial of Wallet attack doesn’t need to. It just needs to spend your money.

In the era of auto-scaling cloud infrastructure and usage-based AI pricing, your systems are designed to be un-crashable. If traffic spikes, more instances spin up. If an agent needs more tokens, the API serves them. The meter keeps running.

An attacker doesn’t need to overwhelm your servers. They just need to trick an agent into a recursive reasoning loop — repeatedly calling paid APIs, spawning sub-agents, provisioning cloud resources — while trying to solve an impossible task. Or a task that looks solvable but requires exponentially more compute at each step.

The numbers are already staggering. AnalyticsWeek reports a collective $400 million in unbudgeted cloud spend across the Fortune 500, attributed to the “Predictability Gap” in agentic AI costs. And that’s just the accidental overruns.

Now imagine the intentional ones.

The attack vector nobody’s modeled: An adversary compromises a single MCP tool server. Every agent that connects to it receives a subtly modified tool response that causes slightly more compute per invocation — 15% more tokens, one extra API call in a chain, a retry loop that fires twice instead of once. Across thousands of agents, across days, the cumulative cost is millions. And it never triggers a single alert because no individual request is anomalous.

This is the agentic equivalent of a slow loris attack, but measured in dollars instead of connections.

What traditional security misses: Rate limiting protects against burst abuse. It doesn’t protect against an agent that spends $0.03 per request — a normal amount — but makes 10,000 requests per hour because it’s stuck in a reasoning loop that looks productive. You need budget-bound authorization: every agent’s compute expenditure capped by the human who authorized it, enforced cryptographically, attenuated at each delegation step.

3. Agent Supply Chain Attacks: OpenClaw Was Just the Beginning

Prediction: A major enterprise breach in 2026 will be traced to a compromised MCP server or agent skill marketplace, not to the AI model itself.

We’ve already seen the preview. Antiy CERT confirmed 1,184 malicious skills across ClawHub, the marketplace for the OpenClaw AI agent framework. Trend Micro found 492 MCP servers exposed to the internet with zero authentication.

The Model Context Protocol was built for capability first, with authentication, authorization, and sandboxing left to the implementer. And most implementers skipped all three.

This is the npm left-pad moment for agentic AI. Except the blast radius is larger because:

- Agent skills have tool access (filesystem, network, databases)

- Agents operate with human-level permissions (OAuth tokens, API keys)

- Compromised skills execute autonomously across thousands of enterprise systems simultaneously

PromptSpy — the first Android malware to abuse generative AI in its execution flow — proved the concept on mobile. The enterprise version, where a compromised MCP server silently exfiltrates data from every agent that connects to it, is a matter of when, not if.

The fix that doesn’t exist yet: We secure npm with lockfiles and hash pinning. We secure Docker with image signatures and SBOM. We secure APIs with OAuth. Who secures MCP? The answer today is: nobody. We need cryptographic MCP server identity verification — where every MCP server has a signed identity document, every tool call is authenticated, and every response is integrity-checked.

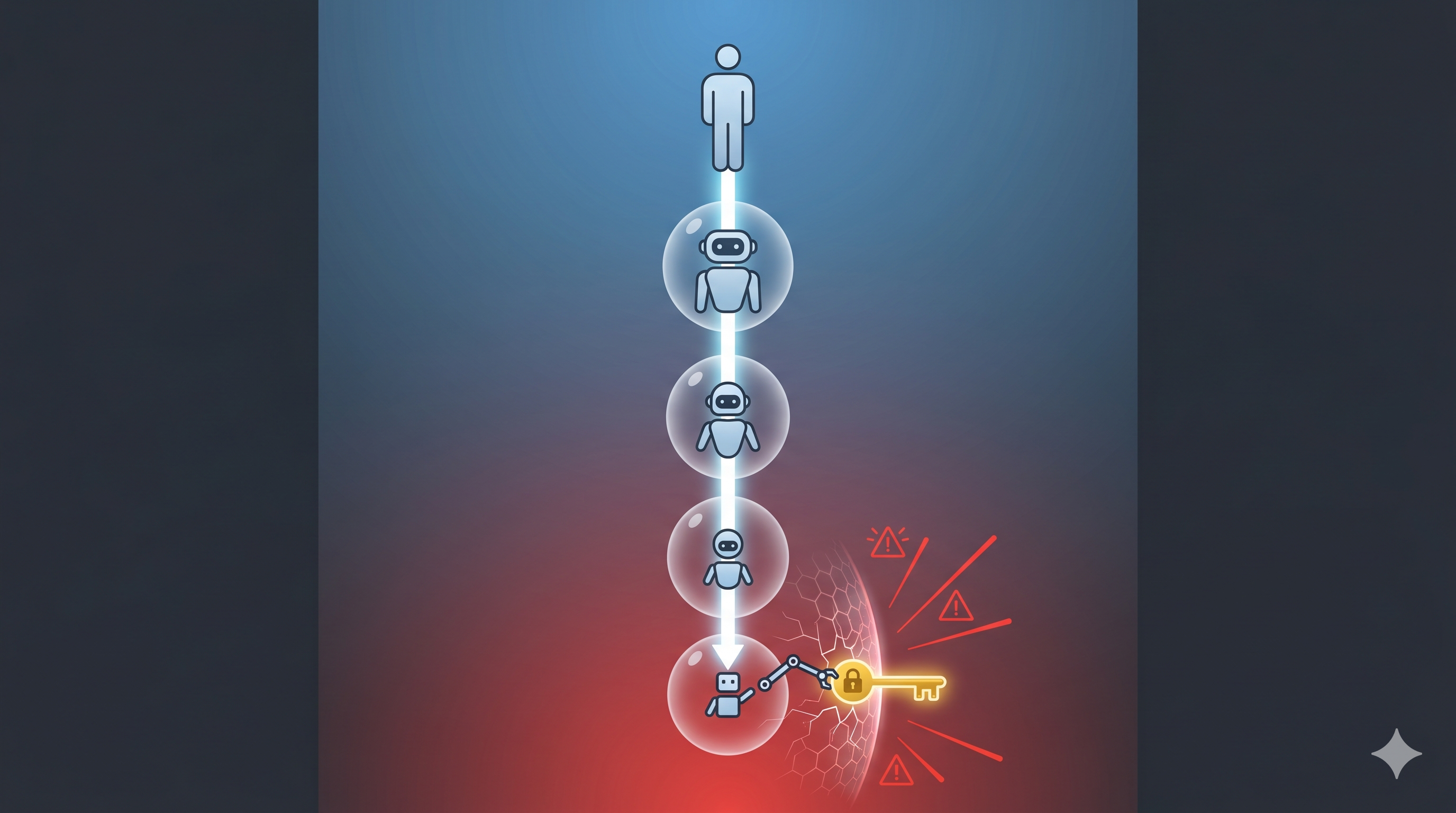

4. Cross-Agent Privilege Escalation: The Delegation Chain Attack

Prediction: The first major “agent-to-agent” privilege escalation exploit will involve an attacker-controlled agent manipulating its delegation chain to claim permissions it was never granted.

When Agent A delegates to Agent B, Agent B should get a subset of A’s permissions. That’s the theory. In practice, most delegation implementations today pass credentials, not capabilities.

The attack: Agent B is compromised (or was malicious from the start). It receives Agent A’s OAuth token. It uses that token not for the delegated task, but to call APIs that Agent A has access to but never intended to share. Agent A delegated “search flights.” Agent B used the same credential to “read all emails.”

Anthropic acknowledged this gap explicitly, publishing a framework on April 9, 2026 stating that “the model layer alone cannot secure agentic AI.” Their recommendation: enforce identity delegation at the infrastructure layer, where “agents inherit but never exceed human-level permissions.”

But inheritance without attenuation is still dangerous. If I authorize an agent to book flights with my credit card, and that agent delegates to a sub-agent to check seat availability, should the sub-agent also have my credit card? Today, in most frameworks, it does.

What’s needed: Per-hop identity with mandatory scope narrowing. Each agent in a chain gets its own cryptographic credential, provably narrower than its parent’s. The sub-agent checking seats gets read:flights. Period. Not my credit card, not my email, not my calendar.

5. The 22-Second Breach: Autonomous Attack Chains

Prediction: By end of 2026, the median time from initial exploit to data exfiltration in an AI-assisted attack will drop below 30 seconds — faster than any human SOC can respond.

Anthropic’s Mythos model produced 181 working exploits from known Firefox vulnerabilities on a standardized test, compared to 2 by the previous model. That’s a 90x improvement in a single generation.

JazzCyberShield’s research puts the current agentic breach window at 22 seconds — from initial access to lateral movement to exfiltration. Attacks now rewrite their own code, move laterally without human operators, and exfiltrate data before most security teams have finished their morning coffee.

The SecurityWeek Cyber Insights 2026 report predicts that by mid-2026, at least one major enterprise will fall to a fully autonomous agentic AI system that executes the entire attack lifecycle — from reconnaissance to exfiltration — without human involvement.

The uncomfortable truth: If the attack takes 22 seconds and your detection pipeline takes 4 minutes, you’ve already lost. The defense can’t be faster humans. It has to be faster authorization — where every action an agent takes is pre-authorized against a policy that was set before the attack began, and any deviation is blocked at the infrastructure layer, not detected after the fact.

6. The Agentic Virus: Self-Modifying Malware That Reasons

Prediction: The first truly “agentic” virus — malware that uses LLM reasoning to decide what to do next, adapting its behavior to each target environment — will be documented in production by Q3 2026.

Security Boulevard described the concept: “An AI-agent-based virus replaces static payloads with an autonomous reasoning engine. It doesn’t execute a fixed script. It reads its environment, makes decisions, and adapts.”

Traditional malware has a fixed payload. It runs the same exploit everywhere. An agentic virus would:

- Scan the target environment and choose the exploit most likely to succeed

- Modify its own code to evade the specific security tools it detects

- Decide whether to persist, exfiltrate, or pivot based on what it finds

- Communicate with other instances to coordinate a distributed attack

This isn’t science fiction. IBM’s “Bob” AI agent was documented downloading and executing malware. The leap from “agent accidentally executes malware” to “malware that IS an agent” is a single prompt engineering step.

What defenses look like: You can’t signature-match malware that rewrites itself. You can’t sandbox code that reasons about its sandbox. The defense has to be identity-first: every agent must prove who it is, who authorized it, and what it’s allowed to do — on every request, with cryptographic proof that can’t be forged. If an agent can’t prove its delegation chain back to a human, it doesn’t execute.

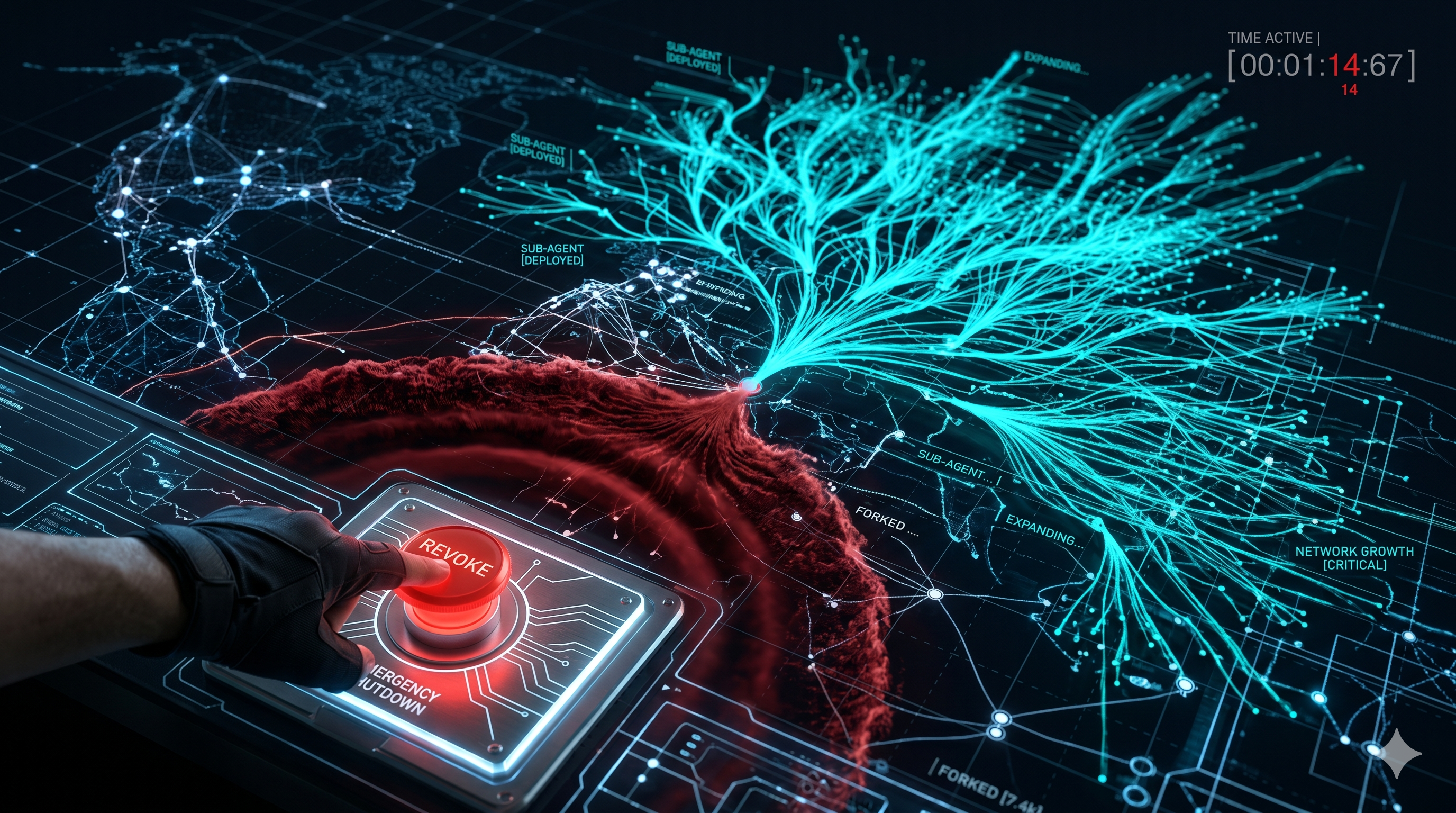

7. The Consent Revocation Race: Who Pulls the Plug on a Swarm?

Prediction: A major incident in 2026 will involve an organization unable to revoke an AI agent’s access quickly enough because the agent had already spawned hundreds of sub-agents across multiple services, each with independently valid credentials.

You revoke the parent agent’s API key. But the parent already delegated to 47 sub-agents, each with their own tokens. Those sub-agents delegated to 200 more. Each token is valid until it expires. Each sub-agent is making API calls right now, across 15 different services that don’t know about each other.

Your key revocation took 3 seconds. The cascade of sub-agent activities will continue for 29 minutes and 57 seconds — until the last token expires.

This is the “revocation problem” that certificate authorities solved 20 years ago with CRL and OCSP. But the agentic AI ecosystem has no equivalent. 48% of security professionals say agentic AI is the top attack vector for 2026, and most of them are thinking about the model. The real attack surface is the delegation infrastructure.

What’s needed: Cascade revocation that propagates instantly — revoking a parent consent must invalidate every descendant in the chain, across every service, in real time. Not when tokens expire. Not on the next refresh. Now.

The Common Thread

Every prediction above shares one root cause: agents don’t have identity.

They borrow human credentials. They share API keys. They pass tokens around without attenuation. When something goes wrong, nobody can answer: which agent did this? Who authorized it? What was it supposed to be doing? Was this action within its scope?

Traditional security — firewalls, WAFs, SIEM, OAuth — was built for a world where humans authenticate and software executes. In the agentic world, software authenticates AND executes. The human is three delegation hops away, asleep, and has no idea their calendar agent just spawned a sub-agent that’s burning through $50,000 of GPU credits trying to solve an impossible optimization problem.

The fix is identity. Real, cryptographic, per-agent, per-hop identity. Where every agent proves its delegation chain back to a human consent. Where every delegation step provably narrows scope. Where every action is bound to the authorization that permitted it.

The web solved server identity 20 years ago with TLS and DNS. It’s time to solve agent identity.

The attacks aren’t waiting.

Karthik Rampalli is the founder of Glyphzero Labs, building cryptographic authorization infrastructure for agentic AI.

Sources:

- Microsoft Security Blog: Threat actor abuse of AI (April 2026)

- Palo Alto Networks: OpenClaw Security Crisis

- Anthropic: Mythos model preview (TechCrunch)

- Anthropic: Agent security framework (Cequence)

- Anthropic: MCP donated to Linux Foundation

- SecurityWeek: Cyber Insights 2026 — Malware in the Age of AI

- Security Boulevard: The Agentic Virus

- ESET: PromptSpy — first Android malware using GenAI

- AnalyticsWeek: The $400M Cloud Leak (FinOps)

- InstaTunnel: Denial of Wallet attacks

- InstaTunnel: Agentic Resource Exhaustion

- Christian Schneider: Memory poisoning in AI agents

- arxiv: MINJA — Memory Injection Attack on LLM Agents

- Kiteworks: Agentic AI attack surface 2026

- JazzCyberShield: 22-second breach window

- Barracuda: Agentic AI threat multiplier

- Biometric Update: MCP delegation gaps

- IBM “Bob” AI downloads malware (PromptArmor)

- Check Point: Agentic AI security risks

- CSA: State of Cloud and AI Security 2026

- LevelBlue: 2026 Predictions — Agentic AI surge